You’ve been paying for Power BI for three years. Your team uses it to build the same five dashboards, refresh them every Monday morning, and paste screenshots into PowerPoint for the board meeting. Somewhere along the way, the tool that was supposed to give you clarity became just another line in your SaaS invoice — one nobody questions, because questioning it would mean admitting you’re not sure what you’re getting from it.

In 2026, that conversation is finally happening. And the answer some companies are landing on is surprising: the most powerful shift in Business Intelligence isn’t coming from a new vendor. It’s coming from a different way of thinking about the problem entirely.

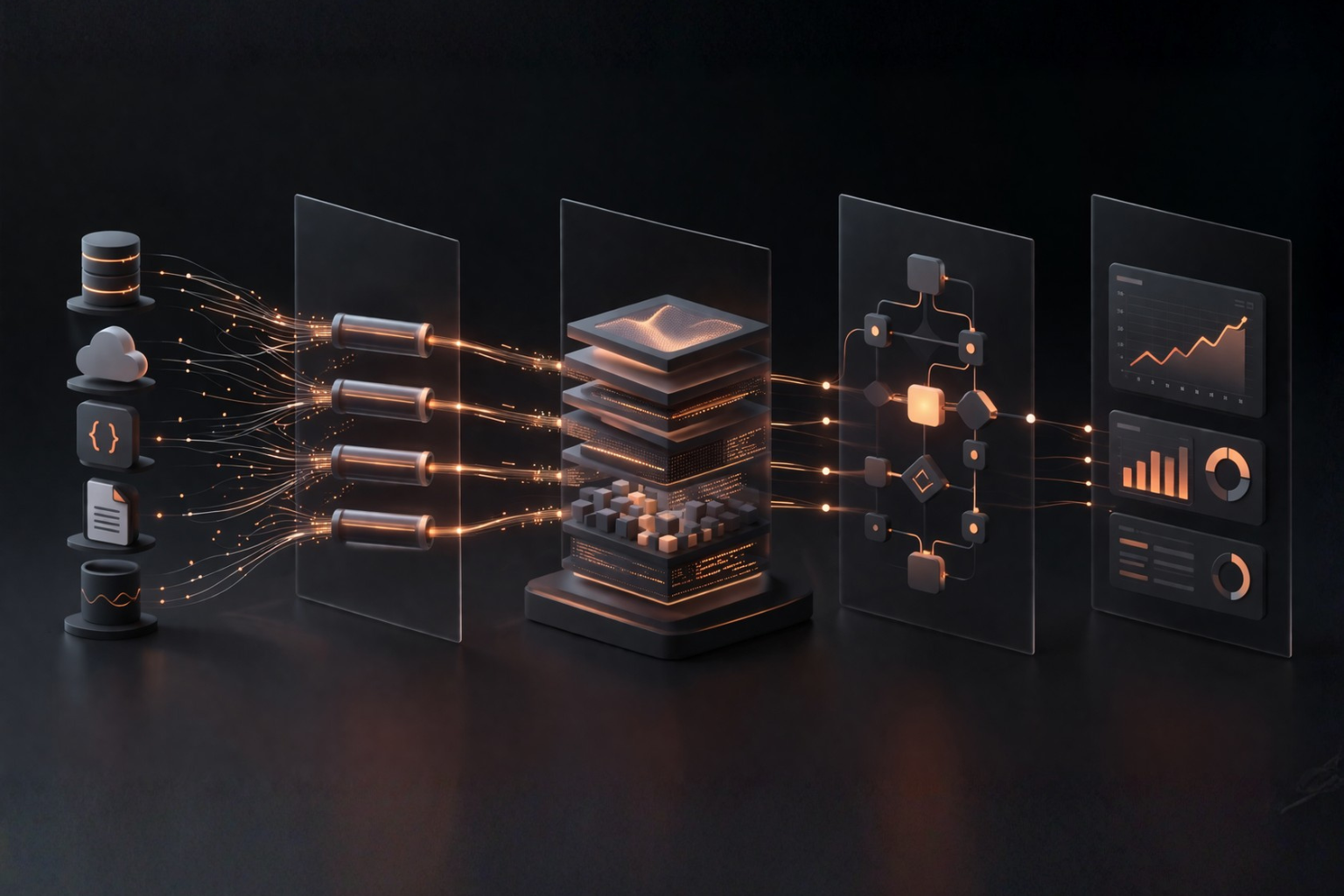

What a modern BI system is made of

To compare tools sensibly, you first need to separate their roles. Otherwise, it becomes very easy to put fundamentally different things into the same category.

The first area is data storage and query execution. This includes tools such as PostgreSQL, ClickHouse, DuckDB, and Trino. Some work well as analytical databases, some are better for local analysis, and others are really a query layer over multiple sources.

The second area is extracting and loading data from source systems. On the open source side, Airbyte is an important reference point, while on the managed-service side Fivetran is a common comparison.

The third area is data transformation and modeling, which turns raw tables into something stable enough for consistent reporting. Here, dbt and SQLMesh matter most.

The fourth area is orchestration, meaning scheduling and supervising data jobs. The classic reference point here is Apache Airflow.

The fifth area is reporting and self-service BI. On the open source side, teams most often evaluate Metabase, Apache Superset, and Lightdash, while on the commercial side the reference points are usually Power BI, Tableau, and Looker.

Only after separating those roles does it become clear that open source BI is not a single product. It is a set of separate decisions that have to be made layer by layer.

What makes sense in each area

Data warehouse, analytical database, and query layer

This is the foundation of the whole setup. Choices made here affect query performance, scaling, and the cost of further development.

PostgreSQL remains a very strong starting point. The project has nearly 40 years of active development behind it, and its supported versions continue to receive updates in 2026. For small and mid-sized analytical workloads, PostgreSQL can be entirely sufficient, especially if the team already knows it well.

ClickHouse is a column-oriented database designed for OLAP analytics. It tends to perform well when data volumes grow and query workloads become heavier. It offers strong performance but usually demands a more deliberate approach to modeling and operations than managed services do.

DuckDB works extremely well for local analysis, file-based workflows, and quick prototyping. At the same time, it is important to remember the concurrency limits described in the documentation: either a single process can both read and write to the database, or multiple processes can read from it while none can write. Concurrent writes are supported only within a single process. That makes DuckDB an excellent tool for an analyst, but not a natural choice for a central shared database used by many people at once.

Trino serves a different purpose from a classic warehouse. It is a distributed SQL query engine that lets organizations query data where it already lives. It makes sense when data is spread across many systems and the company wants a common access layer without moving everything into one place.

On the commercial side, the main reference points are Snowflake and BigQuery. Their advantage is convenience: maintenance, updates, and much of the tuning stay with the provider. The price for that convenience is less architectural control and deeper dependence on the service model.

The conclusion is straightforward: open source makes sense in this area when a company truly needs more control or has requirements that a convenient managed service cannot reasonably meet. If the priority is fast delivery and low operational overhead, a managed model is often the better choice.

Extracting and loading data

In practice, this area is about how data gets into the warehouse and how much work it takes to keep source connections running.

Airbyte advertises more than 600 pre-built connectors, and Airbyte Core can be deployed as a self-managed system. For many teams, that is attractive because it offers more control over data movement without having to build everything from scratch.

It also matters that Airbyte Enterprise Flex separates the management layer from the part that actually transfers data inside the customer’s infrastructure. In multi-region deployments, workspaces can be mapped to specific regions, and the documentation says clearly that data in such a workspace is moved only through the assigned processing environment. That is a real argument for organizations that need tight control over where data is processed.

At the same time, this choice does not remove operational work. Anyone who self-hosts such a system also takes responsibility for monitoring, upgrades, connector failures, and environment maintenance. This is where the main rule of open source BI becomes visible: more control usually means more day-to-day responsibility.

Data transformation and modeling

This is the area that often determines the actual quality of analytics. Without it, a company usually ends up with an unstructured collection of tables and SQL queries. With it, the company can have consistent metric definitions, quality tests, and a logical data model.

dbt is the main reference point here. Its documentation emphasizes that it brings software engineering practices into analytics work: version control, testing, modularity, CI/CD, and documentation. The installation documentation also states clearly that dbt Core remains an open source project under the Apache 2.0 license.

SQLMesh moves in a similar direction, but places stronger emphasis on change planning and impact analysis before execution. For some teams, that can be a very sensible alternative.

This is also one of the areas where open source performs especially well. It is possible to build a mature transformation layer without buying a full commercial platform, as long as the team works with the discipline required by code, tests, and deployment processes.
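To make "quality tests" concrete, here is a minimal sketch of the underlying idea: a check is a SQL query that returns the offending rows, and an empty result means the check passes. This is not dbt's API. It uses Python's built-in `sqlite3` module, and the `orders` table, its columns, and the `failing_rows` helper are all hypothetical names chosen for illustration.

```python
import sqlite3


def failing_rows(conn, query):
    """Run a check query; an empty list means the check passes."""
    return conn.execute(query).fetchall()


# Hypothetical sample data with two deliberate problems:
# a duplicated order_id (2) and a missing customer_id (order 3).
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
    INSERT INTO orders VALUES
        (1, 10, 99.0),
        (2, 11, 25.5),
        (2, 12, 40.0),
        (3, NULL, 15.0);
    """
)

# Each check selects the rows that violate an expectation.
checks = {
    "order_id is unique": (
        "SELECT order_id, COUNT(*) FROM orders "
        "GROUP BY order_id HAVING COUNT(*) > 1"
    ),
    "customer_id is not null": (
        "SELECT order_id FROM orders WHERE customer_id IS NULL"
    ),
    "amount is non-negative": (
        "SELECT order_id FROM orders WHERE amount < 0"
    ),
}

results = {name: failing_rows(conn, sql) for name, sql in checks.items()}

for name, rows in results.items():
    status = "PASS" if not rows else f"FAIL ({len(rows)} offending row(s))"
    print(f"{name}: {status}")
```

In a real project, checks like these would live next to the models in version control and run in CI, which is where the discipline mentioned above comes in.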

Orchestration

Apache Airflow is an open platform for scheduling, running, and monitoring batch workflows. Workflows are defined in Python, and the web interface helps teams follow dependencies and troubleshoot problems.
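The core idea behind "following dependencies" can be sketched without any orchestration tool. The example below is not Airflow code. It uses the standard-library `graphlib` module (Python 3.9+) to derive a valid run order from a dependency graph, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_models": {"extract_orders", "extract_customers"},
    "run_quality_tests": {"transform_models"},
    "refresh_dashboards": {"run_quality_tests"},
}


def run_order(dag):
    """Return one valid execution order in which every task
    appears after all of its dependencies."""
    return list(TopologicalSorter(dag).static_order())


order = run_order(pipeline)
print(" -> ".join(order))
```

An orchestrator adds scheduling, retries, logging, and a UI on top of this ordering, and those are the parts that create the operational work.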

On paper, that sounds simple. In practice, you still need to operate the scheduler, metadata database, workers, and the rest of the surrounding production setup. That is why Airflow fits organizations that want that control in-house or already have a team capable of operating such a platform. If not, managed variants of this type of solution are often the more sensible route.

Reporting and self-service BI

This is the area most visible to end users, and at the same time the one where the difference between open source and commercial tools is often the most noticeable.

Metabase Open Source can be run as an official Docker image or a standalone JAR file. It is relatively easy to start with. But the Metabase self-hosting documentation also lists the components needed for production operation: high-availability servers, a load balancer, an application database, SMTP, backups, monitoring, and SSL certificates. The infrastructure costs shown there start at roughly 112–132 dollars per month before team time is added.

Apache Superset offers more flexibility and broad data exploration capabilities, but the Superset quickstart explicitly says that Docker Compose is intended for quick starts and sandbox or development environments, and is not recommended for production. The production documentation points further toward Kubernetes-based deployments.

Lightdash is especially interesting for organizations already working heavily with dbt. At the same time, the Lightdash documentation is equally clear that secure production self-hosting requires strong knowledge of Docker, Kubernetes, and security, and that for most teams the cloud version is the recommended route.

On the commercial side, Power BI, Tableau, and Looker still have an advantage where user convenience, product maturity, and wide support for larger business audiences matter most. That is why reporting is the area where it is worth calculating very carefully whether self-hosting really pays off.

What open source really gives you, and what it only promises

The strongest argument for open source is still control. A company can choose its own infrastructure, understand the boundaries of the solution more precisely, and avoid accepting the full logic of a single vendor. In some areas, especially data transformation and orchestration, that is a very real advantage.

The second argument is the lower risk of being trapped inside one ecosystem. But this is where simplifications become dangerous. The open source BI market in 2026 often works in a mixed model: open editions exist alongside paid versions, cloud services, or management layers operated by the vendor. That does not cancel the benefits of open source, but it does show that “no lock-in” is not a binary state.

The third argument is cost, and this is the easiest place to oversimplify. The absence of a license fee does not automatically mean a lower total cost. If a company operates its own analytical database, integrations, monitoring, backups, upgrades, and high-availability environments, the cost comes back in another form: team time, infrastructure, and greater operational complexity.

The fourth argument is flexibility. It is true, but only when the organization has a real reason to use it. If the needs are standard and the team is small, a very flexible setup may turn out to be simply a more expensive way to achieve the same result.

Security, data sovereignty, cost, and AI

Security

Self-hosting can provide greater control over networking, access policies, and where data is processed. But that does not automatically make the system more secure. That advantage still has to be delivered operationally through fast patching, monitoring, backups, change control, and incident response.

In other words, open source can increase control over security, but it does not remove responsibility for security. In many organizations, that is the line between a good idea and an expensive illusion.

Data sovereignty

This is another area where simple slogans do not help. Storing data in Europe or inside your own infrastructure does not settle the sovereignty question on its own. You still need to distinguish at least a few things: where processing happens, how management is structured, what access the vendor has, what the contractual relationship looks like, and whether international transfers are involved.

That is why tools such as Airbyte can genuinely help with control over where data is processed, but they do not create a universal legal answer by themselves. If the issue concerns data transfers to third countries, the reference point remains the EDPB Recommendations 01/2020. Stronger conclusions require legal analysis, not just a technology statement.

Cost

The most honest answer is: it depends on the type of organization. Open source can reduce licensing costs and offer greater flexibility. However, the full picture also includes implementation, maintenance, DevOps and data engineering expertise, monitoring, support, and the time required to respond to issues.

For a company with a mature technical team and specific requirements, this model can be very cost-effective. For a smaller organization that simply wants to report on data efficiently without building its own platform, the operational overhead can quickly outweigh the apparent savings.
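A rough back-of-the-envelope calculation shows how quickly team time dominates the picture. In the sketch below, only the 112–132 dollar infrastructure range comes from the Metabase self-hosting figure cited earlier. The operations hours, the hourly rate, and the per-user license price are hypothetical placeholders to be replaced with your own numbers.

```python
import math


def monthly_tco(infra_usd, ops_hours, hourly_rate_usd, license_usd=0.0):
    """Monthly total cost: infrastructure + team time + any license fees."""
    return infra_usd + ops_hours * hourly_rate_usd + license_usd


def break_even_users(self_hosted_usd, price_per_user_usd):
    """Smallest user count at which a per-user license costs at least
    as much as the self-hosted setup."""
    return math.ceil(self_hosted_usd / price_per_user_usd)


# Assumptions (hypothetical): 8 hours of operations work per month
# at 60 USD per hour, and a commercial license at 15 USD per user.
OPS_HOURS = 8
HOURLY_RATE = 60
PRICE_PER_USER = 15

low = monthly_tco(112, OPS_HOURS, HOURLY_RATE)   # lower infrastructure bound
high = monthly_tco(132, OPS_HOURS, HOURLY_RATE)  # upper infrastructure bound

print(f"Self-hosted: {low:.0f}-{high:.0f} USD per month")
print(f"Break-even at about {break_even_users(low, PRICE_PER_USER)} users")
```

Under these assumed inputs, team time accounts for 480 of the roughly 600 dollars per month, so the outcome depends far more on the hours estimate than on the infrastructure bill.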

AI

AI genuinely helps with execution work: writing SQL, helper code, tests, documentation, or diagnosing simpler problems. But there is no basis for turning that into a universal promise of faster end-to-end BI delivery.

The evidence is mixed. A study on GitHub Copilot reported a 55.8 percent speed-up in a task focused on implementing a simple HTTP server in JavaScript. By contrast, a METR study on experienced open source developers found the opposite result: on average, work took 19 percent longer when AI tools were allowed.

The practical conclusion for BI is simple. AI can accelerate some execution-heavy work, but it does not replace decisions about the data model, metric definitions, data quality, operational responsibility, or security architecture.

When open source BI makes sense

Open source BI makes sense when an organization clearly understands why it wants to take control over a specific part of the data platform.

Most often, it is a sensible path when the company:

- has or is building a team capable of operating production systems,

- truly needs more control over architecture, integrations, or where data is processed,

- consciously accepts the additional operational responsibility,

- wants to avoid full dependence on a single vendor ecosystem.

A more managed model is usually a better fit when:

- the team is small and does not want to build its own data platform,

- fast launch and predictable maintenance matter most,

- business-user convenience is the top priority,

- the cost of self-hosting does not create proportional benefit.

In practice, the best results often come from a mixed approach. Some companies choose open source where it brings the most real advantage, for example in transformation or orchestration. At the same time, they keep more managed solutions where self-operation would be too expensive or simply unnecessary.

In the end, it is worth asking a few simple questions:

- Which parts of our data platform are truly strategic?

- Where do we need more control, and where is a reliable service enough?

- Do we have the skills to operate this setup without losing quality or security?

- Where will open source actually reduce cost or improve fit?

- Which tasks can AI sensibly speed up today, and which ones should still not be handed over to automation?

If an organization can answer those questions honestly, open source BI stops being a trend or a worldview. It becomes a reasonable project decision.